A simple formatting syntax invented for bloggers has become the native tongue of artificial intelligence -- and understanding why changes how you work with these systems forever.

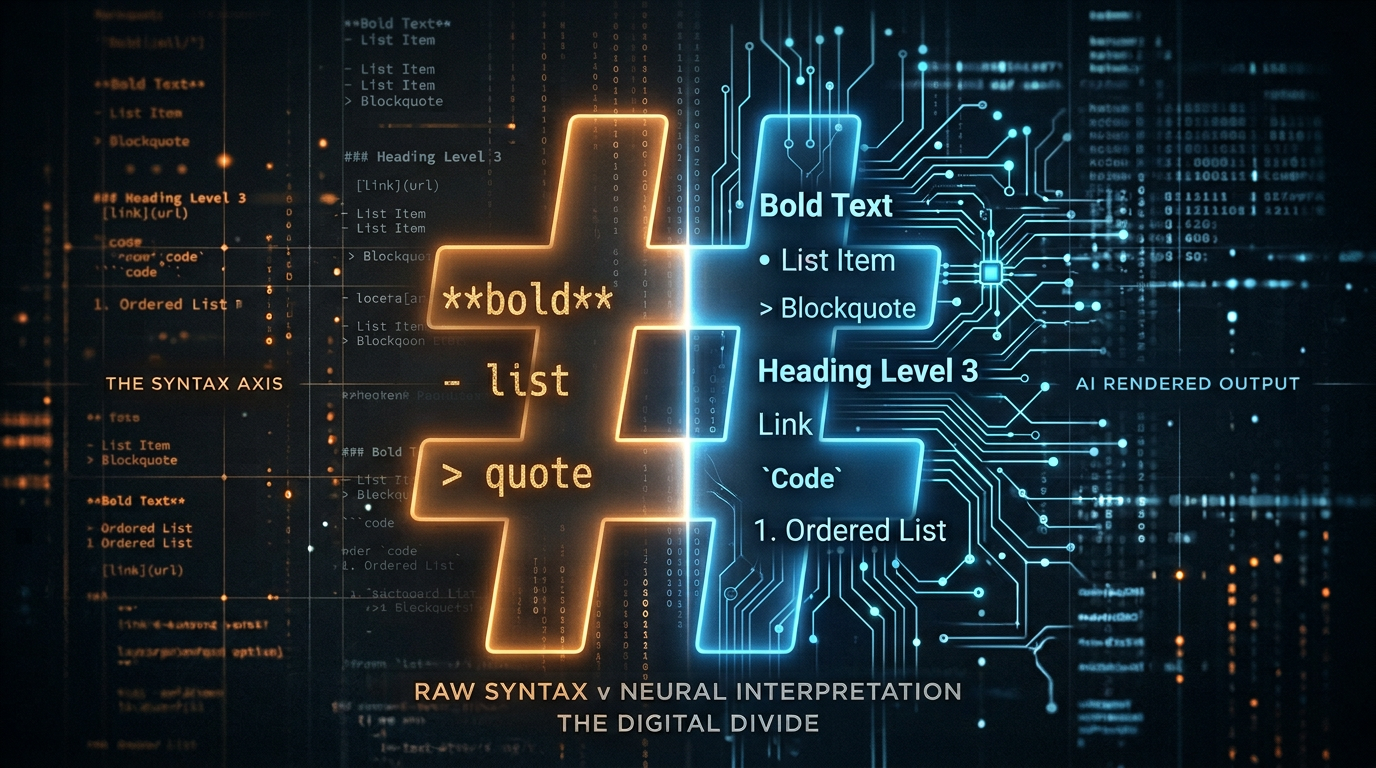

Every time you ask ChatGPT a question and it responds with bold headers and bulleted lists, you're witnessing something quietly remarkable. The AI isn't just generating text -- it's generating structured text in a 20-year-old syntax designed for bloggers who didn't want to learn HTML. Markdown, John Gruber's minimal formatting language from 2004, has become the de facto communication layer between humans and large language models.

This isn't a coincidence. And understanding the relationship between Markdown and AI will make you dramatically better at both writing prompts and interpreting outputs.

What Markdown Actually Is (And Why It Was Simple by Design)

Markdown was born from frustration. In 2004, John Gruber created Markdown -- a way to write for the web without wrestling with HTML tags -- with Aaron Swartz serving as his sounding board. Swartz had already built a related markup language called atx in 2002, and his influence shaped the direction of Gruber's thinking. Their solution was elegantly constrained: use punctuation characters that already look like what they mean.

```
# This looks like a heading

**This looks bold**

- This looks like a list item

> This looks like a quote
```

The core insight was that plain text with Markdown formatting is readable even without rendering. A `**bold**` word in an email doesn't feel broken -- it still communicates emphasis. A `# Header` still reads as a title. This readability-without-rendering is the property that made Markdown accidentally perfect for AI.

Markdown's full specification fits on a single page. It covers:

- Headings via `#` symbols (one through six levels)

- Emphasis via `*asterisks*` or `_underscores_`

- Lists via `-` dashes or `1.` numbers

- Code via backticks: `inline` or triple-backtick fences

- Links via `[text](url)` syntax

- Blockquotes via `>` arrows

- Horizontal rules via `---`

That's essentially it. No XML. No closing tags. No namespaces. Just punctuation and whitespace.
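
To see how little machinery that syntax needs, here is a rough Python sketch that labels each line of a Markdown source by its block-level element. The pattern table and the `classify_line` name are illustrative assumptions, not part of any Markdown specification, and the patterns cover only the common cases listed above.

```python
import re

# (pattern, label) pairs for the block-level elements listed above.
# These are simplified patterns, checked in order; real parsers handle
# many more edge cases (indentation, setext headings, nested blocks).
BLOCK_PATTERNS = [
    (re.compile(r"^#{1,6}\s+\S"), "heading"),
    (re.compile(r"^(-{3,}|\*{3,}|_{3,})\s*$"), "horizontal_rule"),
    (re.compile(r"^\s*([-*+]|\d+\.)\s+\S"), "list_item"),
    (re.compile(r"^>\s?"), "blockquote"),
    (re.compile(r"^(`{3,}|~{3,})"), "code_fence"),
]


def classify_line(line: str) -> str:
    """Return a coarse label for one line of Markdown source."""
    for pattern, label in BLOCK_PATTERNS:
        if pattern.match(line):
            return label
    return "paragraph" if line.strip() else "blank"


sample = "# Title\n\n- first item\n1. numbered item\n> a quote\n---\nPlain text."
labels = [classify_line(line) for line in sample.splitlines()]
print(labels)
```

A handful of regular expressions is enough to recover the skeleton of a document, which is a large part of why the format is so easy for both people and machines to pick up.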

Why AI Models Learned to Think in Markdown

Large language models like GPT-4, Claude, and Gemini were trained on enormous corpora of internet text. And the internet, particularly after 2010, is soaked in Markdown. GitHub, Reddit, Stack Overflow, Discord, Slack, Notion, Obsidian, and tens of thousands of developer blogs all use Markdown as their native writing format.

When a model learns from billions of documents, it doesn't just learn facts -- it learns patterns of communication. And one of the most consistent patterns in high-quality technical writing is: structure your information. Use headings. Use lists. Use code blocks for code.

This is why AI models default to Markdown even when you don't ask them to. They've absorbed the implicit lesson that organized text is better text. A model trained on well-structured documentation learns that complex answers deserve hierarchy, that steps should be numbered, and that code should be set apart from prose.

The practical result: Markdown is now the language AI thinks in, even if it doesn't always render correctly on whatever surface you're using.

Markdown as a Prompt Engineering Tool

Once you understand that AI models are Markdown-native, you can use that to your advantage when writing prompts.

Structure Your Inputs

A prompt written with clear structure gets a more structured response:

```
## Task

Summarize this article in three bullet points.

## Constraints

- Each bullet must be under 20 words
- Avoid technical jargon
- Focus on business impact

## Article

[paste article here]
```

Compare that to: "summarize this article in three bullets, keep each under 20 words, no jargon, focus on business impact." Both prompts contain the same information, but the formatted version tends to be processed more reliably. The model sees a familiar pattern -- headers and lists -- and mirrors that organizational logic in its output.
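
If you build prompts in code, the same structure is easy to generate. The following is a minimal Python sketch; the `build_prompt` function and its parameter names are hypothetical, and the section titles simply mirror the example prompt above.

```python
def build_prompt(task: str, constraints: list[str], article: str) -> str:
    """Assemble a prompt with one Markdown section per concern."""
    constraint_lines = "\n".join(f"- {c}" for c in constraints)
    sections = [
        ("Task", task.strip()),
        ("Constraints", constraint_lines),
        ("Article", article.strip()),
    ]
    # Each section becomes a level-two heading followed by its body.
    return "\n\n".join(f"## {title}\n\n{body}" for title, body in sections)


prompt = build_prompt(
    task="Summarize this article in three bullet points.",
    constraints=[
        "Each bullet must be under 20 words",
        "Avoid technical jargon",
        "Focus on business impact",
    ],
    article="[paste article here]",
)
print(prompt)
```

Keeping the sections in a list makes it cheap to add another one later, such as an output-format section, without touching the rest of the prompt.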

Use Headers to Separate Context from Instructions

One of the most powerful prompt patterns is separating context from instruction with Markdown headings:

```
## Context

You are a senior copywriter for a SaaS company targeting mid-market CFOs.

## Instruction

Write a subject line for an email announcing our new expense reporting feature.
```

The heading separation creates a clear cognitive boundary for the model. It knows where context ends and the task begins.

Code Fences Signal Literal Content

Triple backticks tell an AI model -- just as they tell a Markdown renderer -- that the enclosed content should be treated literally, not interpreted:

````
Rewrite the following SQL query to use a CTE:

```sql
SELECT u.name, COUNT(o.id)
FROM users u JOIN orders o ON u.id = o.user_id
GROUP BY u.name
```
````

Without the fence, a model might try to "interpret" or paraphrase the SQL. The fence says: this is code, not prose.
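
One practical wrinkle: the content you paste may itself contain backticks, which can close a fence early. A common workaround is to make the outer fence longer than any backtick run inside it. Here is a small Python sketch of that idea; the `fence` helper is a hypothetical name, and it assumes the language label is a plain identifier such as `sql`.

```python
import re


def fence(content: str, language: str = "") -> str:
    """Wrap content in a backtick fence that the content cannot close early."""
    # Find the longest run of backticks inside the content.
    longest_run = max((len(run) for run in re.findall(r"`+", content)), default=0)
    # A fence needs at least three backticks, and more than any run inside it.
    marker = "`" * max(3, longest_run + 1)
    return f"{marker}{language}\n{content.rstrip()}\n{marker}"


query = "SELECT u.name, COUNT(o.id)\nFROM users u JOIN orders o ON u.id = o.user_id\nGROUP BY u.name"
print("Rewrite the following SQL query to use a CTE:\n")
print(fence(query, "sql"))
```

Fencing an already fenced snippet with this helper produces a four-backtick outer fence, which is the same trick used when showing Markdown examples inside Markdown.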

How AI Is Changing Markdown Itself

The relationship isn't one-directional. AI is actively evolving how Markdown is used in the wild.

Markdown in AI Outputs Feeds Back Into Training

As AI assistants generate millions of Markdown-formatted responses that humans find useful and share online, those responses become training data for future models. The best AI-generated Markdown -- clear, well-structured, appropriately detailed -- reinforces those patterns. It's a feedback loop: good Markdown produces good AI output, which produces more good Markdown.

Extended Markdown Dialects

The AI era has accelerated adoption of Markdown extensions. GitHub Flavored Markdown (GFM) added tables, strikethrough, and task lists. AI systems have popularized even richer patterns:

- Nested lists with mixed types for complex hierarchies

- Collapsible sections (via HTML `<details>` tags in Markdown)

- Mermaid diagrams embedded in code fences for flowcharts

- Footnotes for citations in AI-generated research summaries

AI as a Markdown Converter

One of the most practical AI use cases is transforming unstructured text into Markdown. Meeting transcripts become structured notes. Dense paragraphs become scannable bullet points. Raw data dumps become formatted tables. The AI understands both what the content means and how to represent it structurally -- a combination that would have required a skilled editor just a few years ago.

The RAG Connection: Why Your Documents Need Good Markdown

If you work with AI systems beyond simple chat -- particularly Retrieval-Augmented Generation (RAG) systems that ground AI responses in your own documents -- Markdown quality directly affects answer quality.

RAG systems work by chunking your documents into pieces, indexing them, and retrieving relevant chunks to feed into an AI's context window when answering questions. Documents with good Markdown structure chunk cleanly. A heading followed by related content stays together. A bulleted list doesn't get split mid-point. Code blocks don't get fragmented across chunk boundaries.

Poorly formatted documents -- walls of text with no structure -- chunk arbitrarily, often cutting through the middle of ideas. The AI retrieves half an explanation, half a code example, and produces a confused answer.

The lesson: if you're building or using a document-based AI system, your document formatting is as important as your document content. Markdown isn't decoration -- it's metadata that tells chunking algorithms where ideas begin and end.
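
To make the chunking point concrete, here is a minimal Python sketch of a heading-aware splitter. It is an illustration under simplifying assumptions, not how any particular RAG framework works: the `chunk_by_heading` name is hypothetical, every heading starts a new chunk, and any fence line simply toggles "inside code" on or off.

```python
import re

HEADING = re.compile(r"^#{1,6}\s")
FENCE = re.compile(r"^(`{3,}|~{3,})")


def chunk_by_heading(markdown: str) -> list[str]:
    """Split Markdown into chunks that each start at a heading."""
    chunks: list[str] = []
    current: list[str] = []
    in_fence = False
    for line in markdown.splitlines():
        if FENCE.match(line):
            in_fence = not in_fence  # simplification: any fence line toggles
        # A "#" line inside a code fence is a comment, not a heading.
        starts_section = bool(HEADING.match(line)) and not in_fence
        if starts_section and current:
            chunks.append("\n".join(current).strip())
            current = []
        current.append(line)
    if current:
        chunks.append("\n".join(current).strip())
    return [chunk for chunk in chunks if chunk]


doc = "\n".join([
    "# Setup",
    "Install the package.",
    "",
    "## Usage",
    "~~~python",
    "# this comment is not a heading",
    "print('hi')",
    "~~~",
    "",
    "## FAQ",
    "- First question",
    "- Second question",
])
chunks = chunk_by_heading(doc)
print(len(chunks))
```

Each chunk keeps its heading attached to the content beneath it, and the code block under "Usage" stays in one piece. A wall of text with no headings would give this splitter nothing to work with, which is the failure mode described above.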

llms.txt: Markdown Meets robots.txt

The RAG insight has now been formalized into an emerging web standard. In September 2024, Jeremy Howard (co-founder of fast.ai) proposed llms.txt -- a Markdown file that websites place at their root, specifically designed for AI consumption.

The analogy is immediate: robots.txt told web crawlers where not to go. llms.txt tells LLMs what you want them to know about your site -- cleanly, without making them parse noisy HTML, JavaScript-rendered pages, or marketing fluff.

A minimal llms.txt looks like this:

```
# Acme Corp

> B2B invoicing software for small businesses.

## Docs

- [Getting Started](https://acme.com/docs/start): Installation and first invoice
- [API Reference](https://acme.com/docs/api): Full REST API documentation
- [Pricing](https://acme.com/pricing): Plans and billing FAQ

## Optional

- [Blog](https://acme.com/blog): Product updates and tutorials
```

That's it. Plain Markdown. A heading, a one-line description, and a curated list of links with descriptions. The format was chosen deliberately because LLMs already understand it natively -- no parsing logic required, no schema to validate against.
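
Even when a program rather than a model reads the file, very little code is needed. The Python sketch below pulls the title, summary, and link sections out of a minimal file like the one above. It is an illustration only, not the proposal's reference tooling: `parse_llms_txt` is a hypothetical name, and the parser assumes exactly the layout shown in the Acme example.

```python
import re

# Matches "- [Title](url): optional note"
LINK = re.compile(r"^-\s*\[(?P<title>[^\]]+)\]\((?P<url>[^)]+)\)(?::\s*(?P<note>.*))?$")


def parse_llms_txt(text: str) -> dict:
    """Parse the title, summary, and link sections of a minimal llms.txt."""
    result = {"title": None, "summary": None, "sections": {}}
    section = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("## "):
            section = line[3:].strip()
            result["sections"][section] = []
        elif line.startswith("# ") and result["title"] is None:
            result["title"] = line[2:].strip()
        elif line.startswith(">") and result["summary"] is None:
            result["summary"] = line[1:].strip()
        elif section is not None and (match := LINK.match(line)):
            result["sections"][section].append(match.groupdict())
    return result


sample = """# Acme Corp

> B2B invoicing software for small businesses.

## Docs

- [Getting Started](https://acme.com/docs/start): Installation and first invoice
- [Pricing](https://acme.com/pricing): Plans and billing FAQ

## Optional

- [Blog](https://acme.com/blog): Product updates and tutorials
"""
parsed = parse_llms_txt(sample)
print(parsed["title"], list(parsed["sections"]))
```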

Sites that adopt llms.txt are making a bet: that the next wave of traffic won't come from humans typing into search bars, but from AI agents researching on behalf of humans. An AI assistant asked to "find me invoicing software for my 10-person team" will reason better from a clean llms.txt than from crawling a homepage cluttered with animations and cookie banners.

The standard is still gaining traction -- adoption is real but far from universal. Early adopters include developer tools, documentation sites, and AI-native companies. If you run a website with content worth knowing about, publishing an llms.txt takes fifteen minutes and positions you ahead of the curve.

It's also a perfect illustration of the arc this article traces: Markdown started as a convenience for bloggers, became the training language of AI, and has now looped back as the deliberate interface from websites to AI. The format that made human writing machine-readable is now being used to make websites machine-comprehensible by design.

Practical Markdown for the AI Age

A few habits that pay dividends immediately:

Write your prompts like documents. Long, complex prompts benefit from headings, bullet constraints, and numbered steps. Treat the prompt as a specification, not a conversation opener.

Use code fences religiously. Any time you paste code, queries, JSON, or structured data into a prompt, wrap it in triple backticks with the language identifier. The model will handle it more precisely.

Request the format you need explicitly. If your output is going into a system that doesn't render Markdown, tell the AI: "respond in plain text, no Markdown formatting." Models will generally comply.
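
If a reply still arrives with Markdown in it and your destination can't render it, a small cleanup pass can serve as a fallback. This Python sketch strips a few common markers; `strip_markdown` is a hypothetical helper, and it deliberately handles only headings, bold, inline code, and links rather than the full syntax.

```python
import re


def strip_markdown(text: str) -> str:
    """Remove a few common Markdown markers, keeping the words they wrap."""
    text = re.sub(r"^#{1,6}[ \t]+", "", text, flags=re.MULTILINE)  # headings
    text = re.sub(r"\*\*(.+?)\*\*", r"\1", text)                   # bold
    text = re.sub(r"`([^`]+)`", r"\1", text)                       # inline code
    text = re.sub(r"\[([^\]]+)\]\(([^)]+)\)", r"\1 (\2)", text)    # links
    return text


reply = "## Summary\n\n**Revenue** grew; see the [report](https://example.com/r) and run `make`."
plain = strip_markdown(reply)
print(plain)
```

Links are rewritten as "text (url)" so the destination address survives even without rendering.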

Export AI outputs as .md files. Most AI interfaces let you copy output as Markdown. These files are portable, renderable anywhere, and searchable. A year of AI-generated notes in a Markdown folder is a searchable knowledge base.

Learn the five-minute subset. You don't need to memorize every Markdown feature. Headers (`#`), bold (`**`), lists (`-`), code fences (`` ``` ``), and links (`[text](url)`) cover the vast majority of real-world usage.

The Bigger Picture

There's something almost poetic about Markdown's trajectory. Gruber designed it to be humanly readable -- to not look broken even when unrendered. That same property, readability in raw form, made it ideal for training data. Human-readable text is machine-learnable text.

As AI becomes the primary interface through which people create and process information, the ability to read and write structured text stops being a niche developer skill. It becomes a foundational literacy. Knowing how to express your thoughts in structured form -- not just for humans, but for the AI systems that increasingly assist, summarize, and amplify those thoughts -- is a leverage point most people are still ignoring.

Markdown is simple. That was always the point. But in the AI age, simple and structured is the most powerful combination available to anyone who writes anything.